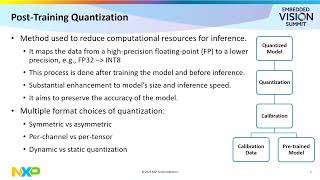

Web Reference:

Jul 30, 2024 · In this blog, we present an end-to-end Quantization-Aware Training (QAT) flow for large language models in PyTorch. We demonstrate how QAT in PyTorch can recover up to 96% of the accuracy degradation on HellaSwag and 68% of the perplexity degradation on WikiText for Llama3 compared to post-training quantization (PTQ).

Feb 9, 2026 · This paper presents the first systematic study of 4-bit quantization-aware training (QAT) for attention. We find that "drop-in" QAT, which naively combines an FP4 forward pass with a high-precision Flash Attention (FA)-style backward pass, leads to training instability.

Learn how Quantization-Aware Training (QAT) improves large language model efficiency by simulating low-precision effects during training. Explore QAT steps, implementations in PyTorch and TensorFlow, and key use cases that help deploy accurate, optimized models on edge and resource-limited devices.
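The idea of "simulating low-precision effects during training" can be illustrated with a minimal, dependency-free sketch. It is not the PyTorch flow or the FP4 attention method referenced above. It assumes symmetric per-tensor integer quantization and a straight-through estimator (the rounding step is treated as identity when computing gradients), which is one common way QAT is described. The names `fake_quantize` and `qat_step` are hypothetical and chosen only for this illustration.

```python
def fake_quantize(values, num_bits=4):
    """Quantize-dequantize: snap floats to a signed integer grid, then map back.

    Assumption: symmetric per-tensor scaling derived from the largest magnitude.
    """
    qmax = 2 ** (num_bits - 1) - 1       # e.g. 7 for 4 bits
    qmin = -(2 ** (num_bits - 1))        # e.g. -8 for 4 bits
    max_abs = max(abs(v) for v in values)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    dequantized = []
    for v in values:
        q = round(v / scale)             # low-precision integer code
        q = max(qmin, min(qmax, q))      # clamp to the representable range
        dequantized.append(q * scale)    # back to float for the rest of the model
    return dequantized, scale


def qat_step(weights, x, y, lr=0.01, num_bits=4):
    """One gradient step on a linear model whose forward pass uses fake-quantized weights.

    The full-precision weights are what gets updated. The gradient is computed
    as if rounding were the identity function (straight-through estimator).
    """
    w_q, _ = fake_quantize(weights, num_bits)
    pred = sum(wq * xi for wq, xi in zip(w_q, x))
    error = pred - y
    loss = error ** 2
    # d(loss)/d(w_i) is approximated by 2 * error * x_i under the straight-through assumption
    new_weights = [w - lr * 2.0 * error * xi for w, xi in zip(weights, x)]
    return new_weights, loss
```

Calling `qat_step` repeatedly on training pairs keeps a full-precision copy of the weights while the loss always reflects the quantized forward pass, which is why the trained model tends to tolerate the later conversion to low precision better than a model quantized only after training.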

Quantization-Aware Training (QAT): Latest Information & Updates 2026

Last Updated: April 5, 2026

Disclaimer: Information provided here is based on publicly available data, media reports, and online sources. Actual details may vary.