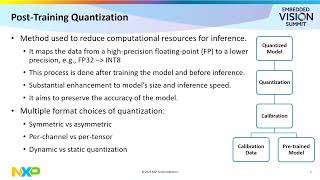

Web Reference: Feb 3, 2024 · This page provides an overview of quantization aware training to help you determine how it fits with your use case. To dive right into an end-to-end example, see the quantization aware training example.

What is quantization aware training (QAT)? Quantization aware training (QAT) is a method of quantization that integrates weight precision reduction directly into the pretraining or fine-tuning process of large language models (LLMs).

Jul 30, 2024 · Learn how to use Quantization-Aware Training (QAT) in PyTorch to improve the accuracy and performance of large language models. See the QAT APIs in torchao and torchtune, and the results on Llama3-8B and XNNPACK.
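To make "integrating weight precision reduction into training" more concrete, here is a minimal sketch of the fake-quantization step that QAT schemes commonly apply to weights in the forward pass: values are rounded onto an integer grid and immediately mapped back to floats, so the model trains against the rounding error it will see after real quantization. This is an illustration in plain Python, not the torchao or torchtune API. The function name `fake_quantize`, the symmetric per-tensor scale, and the 8-bit default are all assumptions chosen for the example. In actual QAT the backward pass typically treats the rounding as an identity (a straight-through estimator), which is not shown here.

```python
def fake_quantize(values, num_bits=8):
    """Quantize floats to a signed integer grid, then dequantize them.

    Hypothetical helper for illustration only: symmetric, per-tensor
    scaling is an assumption, not a description of any library's API.
    Returns the dequantized values and the scale that was used.
    """
    qmax = 2 ** (num_bits - 1) - 1   # e.g. 127 for 8 bits
    qmin = -qmax - 1                 # e.g. -128 for 8 bits

    # Choose the scale so the largest magnitude maps to qmax.
    max_abs = max(abs(v) for v in values)
    scale = max_abs / qmax if max_abs > 0 else 1.0

    dequantized = []
    for v in values:
        q = round(v / scale)            # snap to the nearest integer level
        q = max(qmin, min(qmax, q))     # clamp to the representable range
        dequantized.append(q * scale)   # map back to floating point
    return dequantized, scale


if __name__ == "__main__":
    weights = [0.5, -1.27, 0.003, 1.0]
    fq_weights, scale = fake_quantize(weights)
    for w, fq in zip(weights, fq_weights):
        print(f"original={w:+.4f}  fake-quantized={fq:+.4f}  error={fq - w:+.5f}")
```

With this symmetric scheme, each value moves by at most half of one quantization step (`scale / 2`), which is the perturbation the training loop gets to adapt to.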

YouTube Excerpt: In this video I will introduce and explain

Quantization Aware Training - Latest Information & Updates 2026 Information & Biography

Last Updated: April 5, 2026

Disclaimer: Information provided here is based on publicly available data, media reports, and online sources. Actual details may vary.