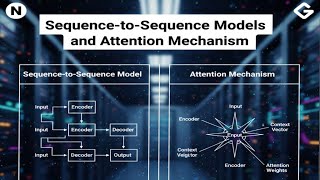

Web Reference: Sep 12, 2025 · The attention mechanism, introduced by Bahdanau et al. in 2014, significantly improved sequence-to-sequence (seq2seq) models. In this post, you'll learn how to build and train a seq2seq model with attention for language translation, focusing on: …

In the following, we will first learn the seq2seq basics, then look at attention, an integral part of all modern systems, and finally examine the most popular model, the Transformer. Of course, with lots of analysis, exercises, papers, and fun!

Oct 14, 2025 · This study shows that a foundational machine learning architecture, the sequence-to-sequence model with attention, mirrors mechanisms of human memory.
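The core idea of Bahdanau-style (additive) attention can be sketched in a few lines. At each decoding step, the decoder state s is compared with every encoder state h_j using a score e_j = vᵀ tanh(W_s s + W_h h_j). The scores are normalized with a softmax into weights α_j, and the context vector is the weighted sum Σ α_j h_j. The sketch below is a minimal illustration under stated assumptions, not the implementation from any of the posts referenced here. It uses plain Python lists instead of a tensor library and a single decoder state, and its hand-picked toy weights stand in for parameters that would normally be learned. The names `additive_attention`, `W_s`, `W_h`, and `v` are hypothetical.

```python
import math
from typing import List, Tuple

Vector = List[float]
Matrix = List[Vector]


def matvec(m: Matrix, x: Vector) -> Vector:
    """Multiply matrix m (list of rows) by vector x."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in m]


def softmax(xs: Vector) -> Vector:
    """Numerically stable softmax: subtract the max before exponentiating."""
    mx = max(xs)
    exps = [math.exp(x - mx) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def additive_attention(
    decoder_state: Vector,
    encoder_states: List[Vector],
    W_s: Matrix,
    W_h: Matrix,
    v: Vector,
) -> Tuple[Vector, Vector]:
    """Hypothetical sketch of additive attention.

    Returns (weights, context): one weight per encoder state, and the
    weighted sum of encoder states.
    """
    projected_s = matvec(W_s, decoder_state)  # W_s s, shared across positions
    scores = []
    for h in encoder_states:
        projected_h = matvec(W_h, h)  # W_h h_j
        hidden = [math.tanh(a + b) for a, b in zip(projected_s, projected_h)]
        scores.append(sum(vi * hi for vi, hi in zip(v, hidden)))  # e_j = v^T tanh(...)
    weights = softmax(scores)  # alpha_j
    dim = len(encoder_states[0])
    context = [
        sum(w * h[k] for w, h in zip(weights, encoder_states)) for k in range(dim)
    ]
    return weights, context


# Toy example: three 2-dimensional encoder states, identity projections
# (assumed values for illustration; a real model learns W_s, W_h, and v).
identity = [[1.0, 0.0], [0.0, 1.0]]
encoder_states = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
decoder_state = [0.5, -0.5]
weights, context = additive_attention(
    decoder_state, encoder_states, identity, identity, [1.0, 1.0]
)
print("attention weights:", [round(w, 3) for w in weights])
print("context vector:", [round(c, 3) for c in context])
```

The weights always sum to 1, so the context is a convex combination of the encoder states. Scoring with a small feed-forward layer (the tanh term) is what distinguishes additive attention from the dot-product scoring used in the Transformer.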

YouTube Excerpt: Welcome to a pivotal video in our NLP module: Sequence-to-Sequence


Seq2seq Models, Attention, How AI: Latest Information & Updates 2026


Last Updated: April 3, 2026


Disclaimer: Information provided here is based on publicly available data, media reports, and online sources. Actual details may vary.