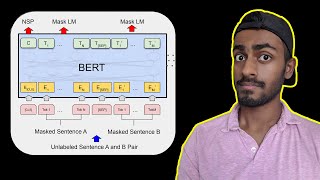

Web Reference: Bidirectional encoder representations from transformers (BERT) is a language model introduced in October 2018 by researchers at Google. [1][2] It learns to represent text as a sequence of vectors using self-supervised learning, and it uses the encoder-only transformer architecture.

After the pre-training phase, the BERT model, armed with its contextual embeddings, is fine-tuned for specific natural language processing (NLP) tasks. This step adapts the model's general language understanding to the nuances of the particular task.

Like all language models, BERT assigns probabilities to words given the words observed around them. Because it is a bidirectional encoder, it predicts a hidden word from the context on both sides, not only from the prior words.
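The self-supervised learning mentioned above is usually described as masked language modeling: some input tokens are hidden and the model is trained to recover them from the surrounding context. The sketch below illustrates only the input-corruption step, assuming the commonly cited settings (about 15% of tokens selected; of those, 80% replaced by a mask token, 10% by a random token, 10% left unchanged). The function name `mask_tokens`, its parameters, and the toy vocabulary are hypothetical, not part of any library.

```python
import random

MASK = "[MASK]"  # placeholder mask token; the exact string is an assumption


def mask_tokens(tokens, vocab, mask_prob=0.15, seed=None):
    """Corrupt a token list for masked language modeling (illustrative only).

    Returns (corrupted, labels). labels[i] is the original token at each
    selected position and None everywhere else.
    """
    rng = random.Random(seed)
    corrupted = list(tokens)
    labels = [None] * len(tokens)
    for i, tok in enumerate(tokens):
        # Select each position independently with probability mask_prob.
        if rng.random() >= mask_prob:
            continue
        labels[i] = tok
        roll = rng.random()
        if roll < 0.8:
            corrupted[i] = MASK  # 80%: replace with the mask token
        elif roll < 0.9:
            corrupted[i] = rng.choice(vocab)  # 10%: replace with a random token
        # remaining 10%: keep the original token in place
    return corrupted, labels


if __name__ == "__main__":
    sentence = "the cat sat on the mat".split()
    corrupted, labels = mask_tokens(sentence, vocab=sentence, seed=0)
    print(corrupted)
    print(labels)
```

During pre-training, the model would see `corrupted` as input and be scored only on the positions where `labels` is not `None`. Fine-tuning then reuses the pre-trained encoder with a small task-specific output layer.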

Language Processing With BERT - Latest Information & Updates 2026

Last Updated: April 5, 2026

Disclaimer: Information provided here is based on publicly available data, media reports, and online sources. Actual details may vary.