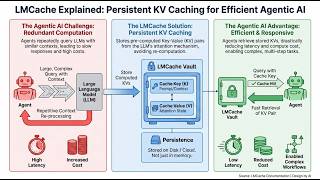

Web Reference:

Jan 30, 2025 · Key-Value caching is a technique that helps speed up this process by remembering important information from previous steps. Instead of recomputing everything from scratch, the model reuses what it has already calculated, making text generation much faster and more efficient.

Mar 12, 2026 · KV caching is one of the most important techniques used to accelerate LLM inference. By storing previously computed attention values, modern inference engines avoid recomputing tokens and dramatically improve generation speed and efficiency.

Oct 1, 2025 · Behind the scenes, a clever optimization called “KV cache” is working overtime to make your conversation feel responsive. Without it, you’d be waiting several times longer for each response.
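The idea in the snippets above can be illustrated with a minimal sketch, assuming a single attention head with random projection weights. All names here (`KVCache`, `step_with_cache`, `step_without_cache`) are hypothetical and chosen for illustration; this is not the code of any particular inference engine. It shows that projecting only the newest token and reusing stored keys and values gives the same attention output as recomputing every key and value at each step.

```python
import math
import random

def matvec(W, x):
    # Multiply matrix W (a list of rows) by vector x.
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]

def attend(q, keys, values):
    # Scaled dot-product attention of one query over all keys/values.
    scale = 1.0 / math.sqrt(len(q))
    scores = [scale * sum(a * b for a, b in zip(q, k)) for k in keys]
    top = max(scores)  # subtract the max for numerical stability
    weights = [math.exp(s - top) for s in scores]
    total = sum(weights)
    dim = len(values[0])
    return [sum(w * v[j] for w, v in zip(weights, values)) / total
            for j in range(dim)]

class KVCache:
    # Hypothetical container: keys and values of all tokens seen so far.
    def __init__(self):
        self.keys = []
        self.values = []

def step_without_cache(Wq, Wk, Wv, tokens):
    # Recompute K and V for every token in the sequence at each step.
    keys = [matvec(Wk, t) for t in tokens]
    values = [matvec(Wv, t) for t in tokens]
    return attend(matvec(Wq, tokens[-1]), keys, values)

def step_with_cache(Wq, Wk, Wv, token, cache):
    # Project only the newest token; reuse everything already stored.
    cache.keys.append(matvec(Wk, token))
    cache.values.append(matvec(Wv, token))
    return attend(matvec(Wq, token), cache.keys, cache.values)

random.seed(0)
d = 4  # toy embedding size (assumption for the demo)

def rand_matrix():
    return [[random.uniform(-1, 1) for _ in range(d)] for _ in range(d)]

Wq, Wk, Wv = rand_matrix(), rand_matrix(), rand_matrix()
tokens = [[random.uniform(-1, 1) for _ in range(d)] for _ in range(5)]

cache = KVCache()
max_diff = 0.0
for t in range(len(tokens)):
    full = step_without_cache(Wq, Wk, Wv, tokens[: t + 1])
    fast = step_with_cache(Wq, Wk, Wv, tokens[t], cache)
    max_diff = max(max_diff, max(abs(a - b) for a, b in zip(full, fast)))

print("largest difference between cached and uncached outputs:", max_diff)
```

In this toy setup the uncached path performs key and value projections for all `t` tokens at step `t`, while the cached path performs them for one token per step, which is where the speedup described above comes from. The trade-off is memory: the cache grows by one key and one value vector per generated token.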

YouTube Excerpt: KV Cache Explained

KV Cache Explained Speed Up - Latest Information & Updates 2026


Last Updated: April 5, 2026

Disclaimer: Information provided here is based on publicly available data, media reports, and online sources. Actual details may vary.

![KV Caching: Speeding up LLM Inference [Lecture] Profile](https://i.ytimg.com/vi/_quDGLpNols/mqdefault.jpg)