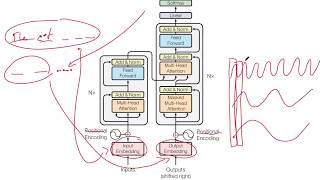

Web Reference: "Attention Is All You Need" [1] is a 2017 research paper in machine learning authored by eight scientists working at Google.

May 15, 2025 · Find out if you need a power transformer based on your voltage, capacity, and power distribution needs. Learn how power transformers support efficient transfer.

Jun 12, 2017 · The dominant sequence transduction models are based on complex recurrent or convolutional neural networks in an encoder-decoder configuration. The best performing models also connect the encoder and decoder through an attention mechanism. We propose a new simple network architecture, the Transformer, based solely on attention mechanisms, dispensing with recurrence and convolutions entirely ...

YouTube Excerpt: Lex Fridman Podcast full episode: https://www.youtube.com/watch?v=cdiD-9MMpb0

Information Profile Overview

Do You Need Transformers I - Latest Information & Updates 2026 Information & Biography



Last Updated: April 3, 2026


Disclaimer: Information provided here is based on publicly available data, media reports, and online sources. Actual details may vary.